
American Sign Language (ASL) is the primary means of communication for around 500,000 people in the US, as well as for people in Canada, Mexico, and 20 other countries. Assistive technology can offer signers an alternative way to communicate with people who do not know the language. This project explores sign language classification: translating ASL into English text in real time.

This gesture-based language lets people readily express ideas and overcome obstacles posed by hearing or speech difficulties, but the vast majority of the global population does not know it. More than 300 different sign languages are in use worldwide, each requiring a similar amount of practice to become fluent, which may discourage the general public from learning one.

To counter this accessibility problem, we can develop assistive technology using machine learning and an action detection system. The idea is to reduce dependence on ASL interpreters or written mediums when communicating with hearing people, and instead give signers an independent way to comfortably translate their signs into words others would understand.

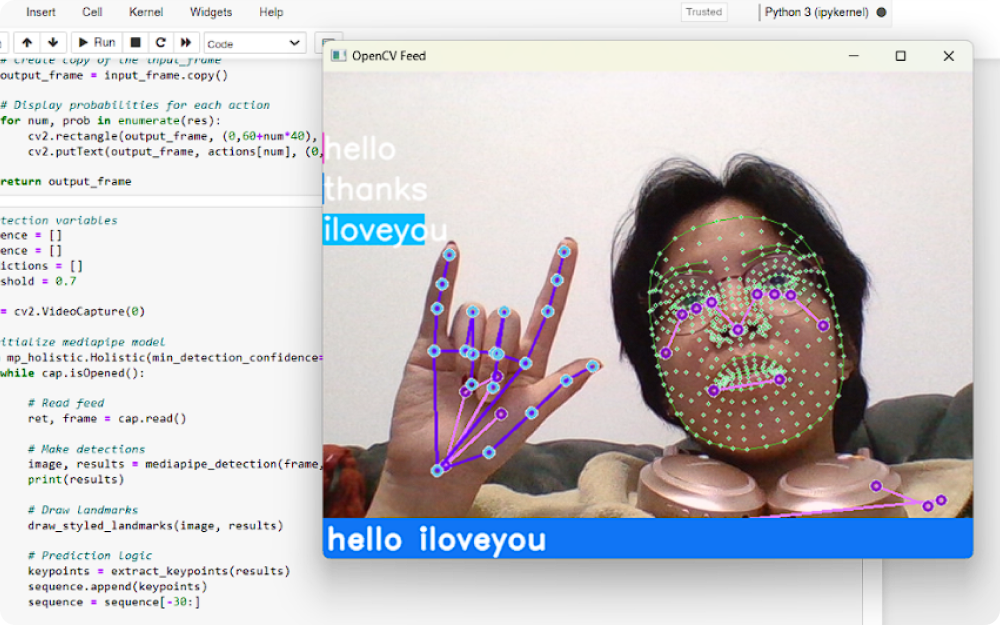

In short, the general objectives are to extract holistic keypoints with MediaPipe, use LSTM layers to build an American Sign Language model based on the concept of action detection, and predict basic ASL gestures in real time from the user’s webcam.
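As a rough illustration of the first objective, the sketch below flattens one frame of MediaPipe Holistic output into a fixed-length feature vector and buffers frames into a sequence that an LSTM could consume. It assumes the default Holistic landmark counts (33 pose, 468 face, 21 per hand). The helper names, the zero-filling of undetected body parts, and the 30-frame window are illustrative assumptions, not details taken from this project.

```python
from collections import deque

# Landmark counts produced by MediaPipe Holistic with default settings.
POSE_POINTS, FACE_POINTS, HAND_POINTS = 33, 468, 21

# Pose landmarks carry (x, y, z, visibility); face and hands carry (x, y, z).
FEATURES_PER_FRAME = POSE_POINTS * 4 + FACE_POINTS * 3 + 2 * HAND_POINTS * 3


def flatten_part(part, count, with_visibility=False):
    """Flatten one landmark group into a list of floats.

    Assumption: a part that was not detected (None) is zero-filled so every
    frame has the same length.
    """
    width = 4 if with_visibility else 3
    if part is None:
        return [0.0] * (count * width)
    values = []
    for lm in part.landmark:
        values.extend([lm.x, lm.y, lm.z])
        if with_visibility:
            values.append(lm.visibility)
    return values


def extract_keypoints(results):
    """Concatenate pose, face, and both hands into one feature vector.

    `results` is expected to look like the object returned by
    mediapipe.solutions.holistic.Holistic.process().
    """
    return (
        flatten_part(results.pose_landmarks, POSE_POINTS, with_visibility=True)
        + flatten_part(results.face_landmarks, FACE_POINTS)
        + flatten_part(results.left_hand_landmarks, HAND_POINTS)
        + flatten_part(results.right_hand_landmarks, HAND_POINTS)
    )


class FrameWindow:
    """Hypothetical helper that keeps the most recent frames for the model."""

    def __init__(self, length=30):  # 30 frames is an assumed window size
        self.frames = deque(maxlen=length)

    def push(self, keypoints):
        """Add one frame; return True once the window is full."""
        self.frames.append(keypoints)
        return len(self.frames) == self.frames.maxlen
```

In a real-time loop, each webcam frame would be passed through Holistic, converted with `extract_keypoints`, and pushed into a `FrameWindow`. Once `push` returns `True`, the buffered frames form a sequence of shape (window length, features per frame) that can be handed to the LSTM model for a prediction.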